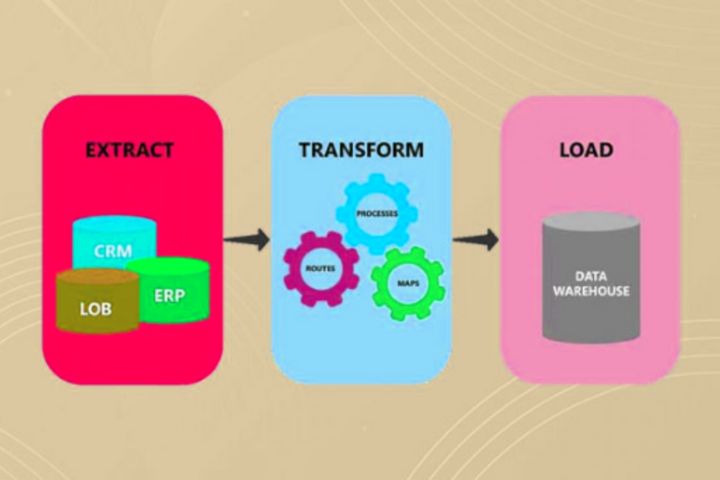

ETL stands for “extract, transform, load”. It is a process that integrates data from different sources into a single repository. There, the data can be processed and analyzed so that useful information can be inferred from it. This information is what helps businesses make data-driven decisions and grow.

Global data creation has increased exponentially. According to Forbes, at the current rate, humans are doubling data creation every two years. As a result, the modern data stack has evolved. Data marts have been converted into data warehouses, and when that hasn’t been enough, data lakes have been created. Across all these infrastructures, one process has remained the same: ETL. In this article, we will look into the methodology of ETL, its use cases, its benefits, and how this process has helped shape the modern data landscape.

Methodology of ETL

ETL makes it possible to integrate data from different sources into one place so that it can be processed, analyzed, and then shared with a business’s stakeholders. It ensures the integrity of the data used for reporting, analysis, and prediction with machine learning models. It is a three-step process. Data is extracted from multiple sources, transformed, and then loaded into business intelligence tools, which businesses use to make data-driven decisions.

In the extraction phase, data is extracted from multiple sources, such as CRM (Customer Relationship Management) software. This is done using SQL queries, Python code, DBMSs (database management systems), or ETL tools. These sources are either structured or unstructured, which is why the format of the data isn’t uniform at this stage.

In the transformation phase, the extracted raw data is transformed and compiled into a format that suits the target system. To get there, the raw data undergoes several transformation sub-processes, such as:

- Cleansing: inconsistent and missing data are handled.
- Standardization: uniform formatting is applied throughout.
- Duplicate removal: redundant data is removed.
- Outlier detection: outliers are identified and normalized.
- Sorting: data is organized in a way that increases efficiency.

Reformatting is not the only reason data needs to be transformed. Null values, if present, should be removed. Outliers, which are often present in the data and skew the analysis, should be dealt with as well. Data is also frequently redundant and brings no value to the business, so it is dropped to save storage space in the target system. These problems are all resolved in the transformation phase.

Once the raw data has been extracted and transformed, it is loaded into the target system, which is usually either a data warehouse or a data lake. There are two different ways to carry out the load phase:

- Full loading: the first time data is loaded into the target system, all of it is loaded at once. This approach is technically less complex but takes more time, and it is ideal when the volume of data isn’t too large.
- Incremental loading: as the name suggests, data is loaded in increments. This approach is fast but technically more complex.

Incremental loading comes in two types:

- Stream incremental loading: data is loaded at intervals, usually daily. This kind of loading is best when the data arrives in small amounts.
- Batch incremental loading: data is loaded in batches, with an interval between two batches. It is ideal when the volume of data is too large.

ETL is carried out in two ways: manual ETL or no-code ETL.
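
The transformation sub-processes described above can be chained into a small pipeline. The following is a minimal sketch in Python that uses only the standard library. It assumes records arrive as dictionaries with hypothetical `customer_id`, `email`, and `amount` fields. Here, outliers are "normalized" by clipping them to the 1.5 × IQR fences. That is one common choice among several, not a method prescribed by any particular ETL tool.

```python
from statistics import quantiles

# Hypothetical required fields; real sources will have their own schemas.
REQUIRED_FIELDS = ("customer_id", "email", "amount")


def cleanse(rows):
    """Cleansing: drop records whose required fields are missing (None or empty)."""
    return [r for r in rows if all(r.get(k) not in (None, "") for k in REQUIRED_FIELDS)]


def standardize(rows):
    """Standardization: give every record the same types and formatting."""
    return [
        {
            "customer_id": int(r["customer_id"]),
            "email": r["email"].strip().lower(),
            "amount": round(float(r["amount"]), 2),
        }
        for r in rows
    ]


def deduplicate(rows):
    """Duplicate removal: keep only the first occurrence of each identical record."""
    seen, unique = set(), []
    for r in rows:
        key = tuple(r[k] for k in REQUIRED_FIELDS)
        if key not in seen:
            seen.add(key)
            unique.append(r)
    return unique


def clip_outliers(rows, field="amount"):
    """Outlier handling: clip values lying outside the 1.5 * IQR fences."""
    values = [r[field] for r in rows]
    if len(values) < 4:  # too few points for meaningful quartiles
        return rows
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    return [{**r, field: min(max(r[field], low), high)} for r in rows]


def sort_records(rows):
    """Sorting: order records by key, which can make downstream lookups more efficient."""
    return sorted(rows, key=lambda r: r["customer_id"])


def transform(rows):
    """Run the sub-processes in order. Standardizing before deduplicating lets
    differently formatted copies of the same record be recognized as duplicates."""
    for step in (cleanse, standardize, deduplicate, clip_outliers, sort_records):
        rows = step(rows)
    return rows


raw = [
    {"customer_id": "3", "email": " Bob@Example.com ", "amount": "20.0"},
    {"customer_id": "1", "email": "ann@example.com", "amount": "10"},
    {"customer_id": "1", "email": "ANN@example.com", "amount": "10.00"},  # duplicate
    {"customer_id": "2", "email": None, "amount": "5"},                   # missing email
    {"customer_id": "4", "email": "cy@example.com", "amount": "12"},
    {"customer_id": "5", "email": "di@example.com", "amount": "14"},
    {"customer_id": "6", "email": "ed@example.com", "amount": "1000"},    # outlier
]

cleaned = transform(raw)
for record in cleaned:
    print(record)
# The last record's amount (1000.0) is clipped to 32.0, the upper IQR fence.
```

Each step takes a list of records and returns a new one, so individual steps can be reordered, removed, or replaced without touching the others. For larger datasets, a dataframe library or the transformations built into an ETL tool could take over this work.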

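The following is a minimal sketch contrasting full and incremental loading. It uses two in-memory SQLite databases from Python's standard `sqlite3` module: one stands in for a source system and the other for the target warehouse. It assumes a hypothetical `customers` table whose `updated_at` column (ISO-formatted date strings) serves as a watermark. The full load replaces the whole table. The batch incremental load uses a SQL query to extract only the rows changed after the last watermark, then writes them in fixed-size batches.

```python
import sqlite3

# Hypothetical schema shared by the source system and the target warehouse.
SCHEMA = "CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT, updated_at TEXT)"
COLUMNS = "id, email, updated_at"


def make_db():
    """Create an in-memory database with the hypothetical customers table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(SCHEMA)
    return conn


def full_load(source, target):
    """Full loading: wipe the target table and copy every source row at once."""
    rows = source.execute(f"SELECT {COLUMNS} FROM customers").fetchall()
    with target:  # commits on success, rolls back on error
        target.execute("DELETE FROM customers")
        target.executemany(f"INSERT INTO customers ({COLUMNS}) VALUES (?, ?, ?)", rows)


def incremental_load(source, target, watermark, batch_size=2):
    """Batch incremental loading: copy only rows changed after `watermark`,
    `batch_size` rows at a time, and return the new watermark."""
    cursor = source.execute(
        f"SELECT {COLUMNS} FROM customers WHERE updated_at > ? ORDER BY updated_at",
        (watermark,),
    )
    while batch := cursor.fetchmany(batch_size):
        with target:  # each batch is committed as its own transaction
            # Upsert: new ids are inserted, changed ids overwrite the old row.
            target.executemany(
                f"INSERT OR REPLACE INTO customers ({COLUMNS}) VALUES (?, ?, ?)", batch
            )
        watermark = batch[-1][2]  # updated_at of the last row loaded
    return watermark


source, target = make_db(), make_db()
with source:
    source.executemany(
        "INSERT INTO customers VALUES (?, ?, ?)",
        [(1, "ann@example.com", "2024-01-01"), (2, "bob@example.com", "2024-01-02")],
    )

full_load(source, target)  # first load: everything at once
watermark = target.execute("SELECT MAX(updated_at) FROM customers").fetchone()[0]

# Later, one row changes and a new one arrives in the source system.
with source:
    source.executemany(
        "INSERT OR REPLACE INTO customers VALUES (?, ?, ?)",
        [(2, "bob@new.example.com", "2024-01-05"), (3, "cy@example.com", "2024-01-06")],
    )

watermark = incremental_load(source, target, watermark)
print(watermark)  # 2024-01-06
print(target.execute("SELECT * FROM customers ORDER BY id").fetchall())
```

The incremental run only reads the two rows newer than the stored watermark instead of the whole table. Because the upsert is idempotent, re-running it with an older watermark after an interruption rewrites rows that were already loaded rather than duplicating them.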